Fine-tuning a GPT — Prefix-tuning, by Chris Kuo/Dr. Dataman

In this and the next posts, I will walk you through the fine-tuning process for a Large Language Model (LLM) or a Generative Pre-trained Transformer (GPT). There are two prominent fine-tuning…

List: LLMs - FINE TUNING, Curated by scitechtalk tv

Time-LLM: Reprogram an LLM for Time Series Forecasting

Fine-tuning GPT-3 Using Python to Create a Q&A Assistant

The data that those large language models were built on

elvis on X: Okay, this is awesome! Instruction Tuning with GPT-4

Guide to fine-tuning Text Generation models: GPT-2, GPT-Neo and T5, by Mohit Mayank

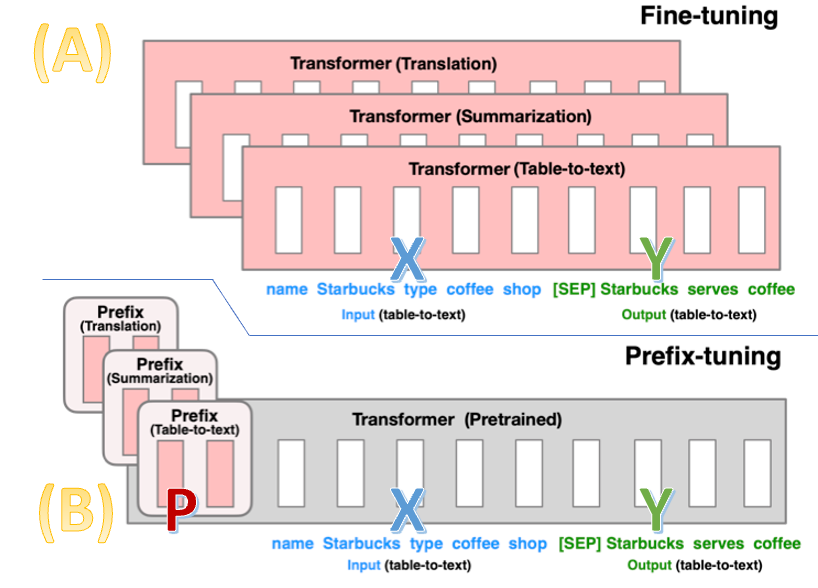

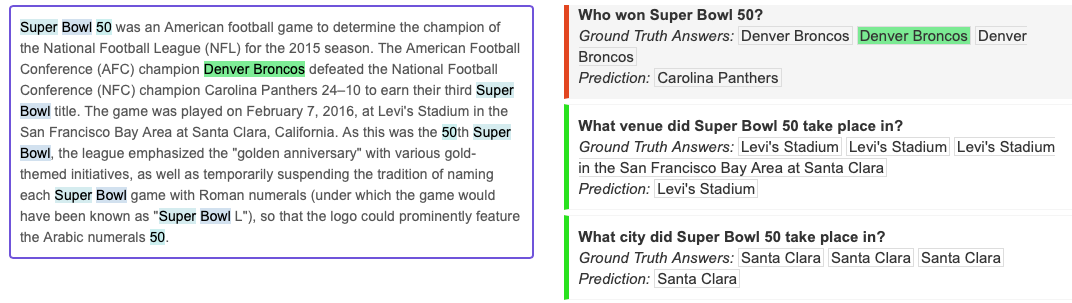

Prefix-Tuning: Optimizing Continuous Prompts for Generation

Fine-Tuning GPT-3.5: A Step-by-Step Guide

A Tutorial on the Open-source Lag-Llama for Time Series

How LoRA can save you big bucks when training your next custom LLM

List: LLM finetuning, Curated by Antonio Mosca

Fine Tune GPT Models Using Lit-Parrot by Lightning-AI